Release history

Version 0.17.1

Changelog

Bug fixes

- Upgrade vendored joblib to version 0.9.4, which fixes an important bug in `joblib.Parallel` that can silently yield wrong results when working on datasets larger than 1MB: https://github.com/joblib/joblib/blob/0.9.4/CHANGES.rst

- Fixed reading of Bunch pickles generated with scikit-learn version <= 0.16. This can affect users who have already downloaded a dataset with scikit-learn 0.16 and are loading it with scikit-learn 0.17. See #6196 for how this affected `datasets.fetch_20newsgroups`. By Loic Esteve.

- Fixed a bug that prevented using ROC AUC score to perform grid search on several CPU / cores on large arrays. See #6147. By Olivier Grisel.

- Fixed a bug that prevented properly setting the `presort` parameter in `ensemble.GradientBoostingRegressor`. See #5857. By Andrew McCulloh.

- Fixed a joblib error when evaluating the perplexity of a `decomposition.LatentDirichletAllocation` model. See #6258. By Chyi-Kwei Yau.

Version 0.17

Changelog

New features

- All the Scaler classes except `RobustScaler` can be fitted online by calling `partial_fit`. By Giorgio Patrini.

- The new class `ensemble.VotingClassifier` implements a “majority rule” / “soft voting” ensemble classifier to combine estimators for classification. By Sebastian Raschka.

- The new class `preprocessing.RobustScaler` provides an alternative to `preprocessing.StandardScaler` for feature-wise centering and range normalization that is robust to outliers. By Thomas Unterthiner.

- The new class `preprocessing.MaxAbsScaler` provides an alternative to `preprocessing.MinMaxScaler` for feature-wise range normalization when the data is already centered or sparse. By Thomas Unterthiner.

- The new class `preprocessing.FunctionTransformer` turns a Python function into a `Pipeline`-compatible transformer object. By Joe Jevnik.

- The new classes `cross_validation.LabelKFold` and `cross_validation.LabelShuffleSplit` generate train-test folds, respectively similar to `cross_validation.KFold` and `cross_validation.ShuffleSplit`, except that the folds are conditioned on a label array. By Brian McFee, Jean Kossaifi and Gilles Louppe.

- `decomposition.LatentDirichletAllocation` implements the Latent Dirichlet Allocation topic model with online variational inference. By Chyi-Kwei Yau, with code based on an implementation by Matt Hoffman. (#3659)

- The new solver `sag` implements Stochastic Average Gradient descent and is available in both `linear_model.LogisticRegression` and `linear_model.Ridge`. This solver is very efficient for large datasets. By Danny Sullivan and Tom Dupre la Tour. (#4738)

- The new solver `cd` implements Coordinate Descent in `decomposition.NMF`. The previous solver based on Projected Gradient is still available by setting the new parameter `solver` to `pg`, but it is deprecated and will be removed in 0.19, along with `decomposition.ProjectedGradientNMF` and the parameters `sparseness`, `eta`, `beta` and `nls_max_iter`. The new parameters `alpha` and `l1_ratio` control L1 and L2 regularization, and `shuffle` adds a shuffling step in the `cd` solver. By Tom Dupre la Tour and Mathieu Blondel.

- Fixed IndexError bug #5495 when doing `OVR(SVC(decision_function_shape="ovr"))`. By Elvis Dohmatob.
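
To make the idea of fitting a scaler online more concrete, here is a minimal sketch in plain Python of how a mean and variance can be updated one batch at a time. It is not scikit-learn's implementation: the class name `RunningStats` and its attributes are hypothetical, and only the method name `partial_fit` is borrowed from the entry above.

```python
import statistics


class RunningStats:
    """Running mean and population variance, updated one batch at a time."""

    def __init__(self):
        self.n = 0
        self.mean = 0.0
        self.m2 = 0.0  # sum of squared deviations from the current mean

    def partial_fit(self, batch):
        # Merge the statistics of the new batch into the running statistics.
        n_b = len(batch)
        if n_b == 0:
            return self
        mean_b = sum(batch) / n_b
        m2_b = sum((x - mean_b) ** 2 for x in batch)
        delta = mean_b - self.mean
        total = self.n + n_b
        self.mean += delta * n_b / total
        self.m2 += m2_b + delta ** 2 * self.n * n_b / total
        self.n = total
        return self

    @property
    def variance(self):
        return self.m2 / self.n


data = [1.0, 4.0, 2.5, 8.0, 3.5, 6.0, 7.5]

stats = RunningStats()
for start in range(0, len(data), 3):
    stats.partial_fit(data[start:start + 3])  # batches of at most 3 values

# Reference values computed from the full data set in one pass.
reference_mean = statistics.mean(data)
reference_variance = statistics.pvariance(data)
```

The batch-wise result should agree with the one-pass reference up to floating-point rounding, which is what allows the statistics to be accumulated without holding all data in memory.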

Enhancements¶

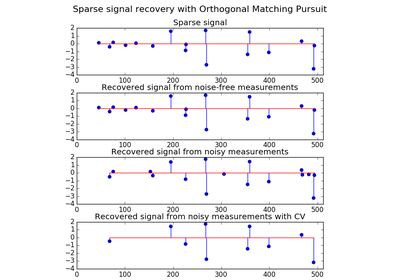

manifold.TSNEnow supports approximate optimization via the Barnes-Hut method, leading to much faster fitting. By Christopher Erick Moody. (#4025)cluster.mean_shift_.MeanShiftnow supports parallel execution, as implemented in themean_shiftfunction. By Martino Sorbaro.naive_bayes.GaussianNBnow supports fitting withsample_weights. By Jan Hendrik Metzen.dummy.DummyClassifiernow supports a prior fitting strategy. By Arnaud Joly.- Added a

fit_predictmethod formixture.GMMand subclasses. By Cory Lorenz.- Added the

metrics.label_ranking_lossmetric. By Arnaud Joly.- Added the

metrics.cohen_kappa_scoremetric.- Added a

warm_startconstructor parameter to the bagging ensemble models to increase the size of the ensemble. By Tim Head.- Added option to use multi-output regression metrics without averaging. By Konstantin Shmelkov and Michael Eickenberg.

- Added `stratify` option to `cross_validation.train_test_split` for stratified splitting. By Miroslav Batchkarov.

- The `tree.export_graphviz` function now supports aesthetic improvements for `tree.DecisionTreeClassifier` and `tree.DecisionTreeRegressor`, including options for coloring nodes by their majority class or impurity, showing variable names, and using node proportions instead of raw sample counts. By Trevor Stephens.

- Improved speed of the `newton-cg` solver in `linear_model.LogisticRegression` by avoiding loss computation. By Mathieu Blondel and Tom Dupre la Tour.

- The `class_weight="auto"` heuristic in classifiers supporting `class_weight` was deprecated and replaced by the `class_weight="balanced"` option, which has a simpler formula and interpretation. By Hanna Wallach and Andreas Müller.

- Add `class_weight` parameter to automatically weight samples by class frequency for `linear_model.PassiveAggressiveClassifier`. By Trevor Stephens.

- Added backlinks from the API reference pages to the user guide. By Andreas Müller.

- The `labels` parameter to `sklearn.metrics.f1_score`, `sklearn.metrics.fbeta_score`, `sklearn.metrics.recall_score` and `sklearn.metrics.precision_score` has been extended. It is now possible to ignore one or more labels, such as where a multiclass problem has a majority class to ignore. By Joel Nothman.

- Add `sample_weight` support to `linear_model.RidgeClassifier`. By Trevor Stephens.

- Provide an option for sparse output from `sklearn.metrics.pairwise.cosine_similarity`. By Jaidev Deshpande.

- Add `minmax_scale` to provide a function interface for `MinMaxScaler`. By Thomas Unterthiner.

- `dump_svmlight_file` now handles multi-label datasets. By Chih-Wei Chang.

- RCV1 dataset loader (`sklearn.datasets.fetch_rcv1`). By Tom Dupre la Tour.

- The “Wisconsin Breast Cancer” classical two-class classification dataset is now included in scikit-learn, available with `sklearn.datasets.load_breast_cancer`.

- Upgraded to joblib 0.9.3 to benefit from the new automatic batching of short tasks. This makes it possible for scikit-learn to benefit from parallelism when many very short tasks are executed in parallel, for instance by the `grid_search.GridSearchCV` meta-estimator with `n_jobs > 1` used with a large grid of parameters on a small dataset. By Vlad Niculae, Olivier Grisel and Loic Esteve. For more details about changes in joblib 0.9.3 see the release notes: https://github.com/joblib/joblib/blob/master/CHANGES.rst#release-093

- Improved speed (3 times per iteration) of `decomposition.DictLearning` with coordinate descent method from `linear_model.Lasso`. By Arthur Mensch.

- Parallel processing (threaded) for queries of nearest neighbors (using the ball-tree). By Nikolay Mayorov.

- Allow `datasets.make_multilabel_classification` to output a sparse `y`. By Kashif Rasul.

- `cluster.DBSCAN` now accepts a sparse matrix of precomputed distances, allowing memory-efficient distance precomputation. By Joel Nothman.

- `tree.DecisionTreeClassifier` now exposes an `apply` method for retrieving the leaf indices samples are predicted as. By Daniel Galvez and Gilles Louppe.

- Speed up decision tree regressors, random forest regressors, extra trees regressors and gradient boosting estimators by computing a proxy of the impurity improvement during the tree growth. The proxy quantity is such that the split that maximizes this value also maximizes the impurity improvement. By Arnaud Joly, Jacob Schreiber and Gilles Louppe.

- Speed up tree based methods by reducing the number of computations needed when computing the impurity measure, taking into account the linear relationship of the computed statistics. The effect is particularly visible with extra trees and on datasets with categorical or sparse features. By Arnaud Joly.

- `ensemble.GradientBoostingRegressor` and `ensemble.GradientBoostingClassifier` now expose an `apply` method for retrieving the leaf indices each sample ends up in under each tree. By Jacob Schreiber.

- Add `sample_weight` support to `linear_model.LinearRegression`. By Sonny Hu. (#4481)

- Add `n_iter_without_progress` to `manifold.TSNE` to control the stopping criterion. By Santi Villalba. (#5185)

- Added optional parameter `random_state` in `linear_model.Ridge`, to set the seed of the pseudo random generator used in the `sag` solver. By Tom Dupre la Tour.

- Added optional parameter `warm_start` in `linear_model.LogisticRegression`. If set to True, the solvers `lbfgs`, `newton-cg` and `sag` will be initialized with the coefficients computed in the previous fit. By Tom Dupre la Tour.

- Added `sample_weight` support to `linear_model.LogisticRegression` for the `lbfgs`, `newton-cg`, and `sag` solvers. By Valentin Stolbunov.

- Added optional parameter `presort` to `ensemble.GradientBoostingRegressor` and `ensemble.GradientBoostingClassifier`, keeping default behavior the same. This allows gradient boosters to turn off presorting when building deep trees or using sparse data. By Jacob Schreiber.

- Altered `metrics.roc_curve` to drop unnecessary thresholds by default. By Graham Clenaghan.

- Added `feature_selection.SelectFromModel` meta-transformer which can be used along with estimators that have a `coef_` or `feature_importances_` attribute to select important features of the input data. By Maheshakya Wijewardena, Joel Nothman and Manoj Kumar.

- Added `metrics.pairwise.laplacian_kernel`. By Clyde Fare.

- `covariance.GraphLasso` allows separate control of the convergence criterion for the Elastic-Net subproblem via the `enet_tol` parameter.

- Improved verbosity in `decomposition.DictionaryLearning`.

- `ensemble.RandomForestClassifier` and `ensemble.RandomForestRegressor` no longer explicitly store the samples used in bagging, resulting in a much reduced memory footprint for storing random forest models.

- Added `positive` option to `linear_model.Lars` and `linear_model.lars_path` to force coefficients to be positive. ([#5131](https://github.com/scikit-learn/scikit-learn/pull/5131))

- Added the `X_norm_squared` parameter to `metrics.pairwise.euclidean_distances` to provide precomputed squared norms for `X`.

- Added the `fit_predict` method to `pipeline.Pipeline`.

- Added the `preprocessing.minmax_scale` function.
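
As an illustration of the `class_weight="balanced"` option mentioned above, the sketch below computes per-class weights as `n_samples / (n_classes * class_count)`, which is the formula given in the scikit-learn documentation for this option. The helper name `balanced_class_weights` and the toy labels are hypothetical; this is plain Python, not the library's code.

```python
from collections import Counter


def balanced_class_weights(y):
    """Weight each class by n_samples / (n_classes * class_count)."""
    counts = Counter(y)
    n_samples = len(y)
    n_classes = len(counts)
    return {label: n_samples / (n_classes * count)
            for label, count in counts.items()}


# Toy, imbalanced label vector: 2 "spam" samples and 8 "ham" samples.
labels = ["spam"] * 2 + ["ham"] * 8
weights = balanced_class_weights(labels)
```

With these weights every class contributes the same total weight, so the rare class is not drowned out by the frequent one.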

Bug fixes

- Fixed non-determinism in `dummy.DummyClassifier` with sparse multi-label output. By Andreas Müller.

- Fixed the output shape of `linear_model.RANSACRegressor` to `(n_samples, )`. By Andreas Müller.

- Fixed bug in `decomposition.DictLearning` when `n_jobs < 0`. By Andreas Müller.

- Fixed bug where `grid_search.RandomizedSearchCV` could consume a lot of memory for large discrete grids. By Joel Nothman.

- Fixed bug in `linear_model.LogisticRegressionCV` where `penalty` was ignored in the final fit. By Manoj Kumar.

- Fixed bug in `ensemble.forest.ForestClassifier` when computing `oob_score` with `X` given as a `sparse.csc_matrix`. By Ankur Ankan.

- All regressors now consistently handle and warn when given `y` that is of shape `(n_samples, 1)`. By Andreas Müller.

- Fix in `cluster.KMeans` cluster reassignment for sparse input. By Lars Buitinck.

- Fixed a bug in `lda.LDA` that could cause asymmetric covariance matrices when using shrinkage. By Martin Billinger.

- Fixed `cross_validation.cross_val_predict` for estimators with sparse predictions. By Buddha Prakash.

- Fixed the `predict_proba` method of `linear_model.LogisticRegression` to use soft-max instead of one-vs-rest normalization. By Manoj Kumar. (#5182)

- Fixed the `partial_fit` method of `linear_model.SGDClassifier` when called with `average=True`. By Andrew Lamb. (#5282)

- Dataset fetchers use different filenames under Python 2 and Python 3 to avoid pickling compatibility issues. By Olivier Grisel. (#5355)

- Fixed a bug in

naive_bayes.GaussianNBwhich caused classification results to depend on scale. By Jake Vanderplas.- Fixed temporarily

linear_model.Ridge, which was incorrect when fitting the intercept in the case of sparse data. The fix automatically changes the solver to ‘sag’ in this case. (#5360) By Tom Dupre la Tour.- Fixed a performance bug in

decomposition.RandomizedPCAon data with a large number of features and fewer samples. (#4478) By Andreas Müller, Loic Esteve and Giorgio Patrini.- Fixed bug in

cross_decomposition.PLSthat yielded unstable and platform dependent output, and failed on fit_transform. By Arthur Mensch.- Fixes to the

Bunchclass used to store datasets.- Fixed

ensemble.plot_partial_dependenceignoring thepercentilesparameter.- Providing a

setas vocabulary inCountVectorizerno longer leads to inconsistent results when pickling.- Fixed the conditions on when a precomputed Gram matrix needs to be recomputed in

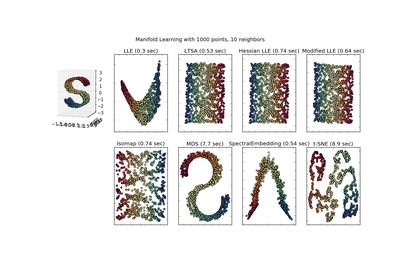

linear_model.LinearRegression,linear_model.OrthogonalMatchingPursuit,linear_model.Lassoandlinear_model.ElasticNet.- Fixed inconsistent memory layout in the coordinate descent solver that affected

linear_model.DictionaryLearningandcovariance.GraphLasso. (#5337 <https://github.com/scikit-learn/scikit-learn/pull/5337>) By Olivier Grisel.manifold.LocallyLinearEmbeddingno longer ignores theregparameter.- Nearest Neighbor estimators with custom distance metrics can now be pickled. (4362 <https://github.com/scikit-learn/scikit-learn/pull/4362>)

- Fixed a bug in `pipeline.FeatureUnion` where `transformer_weights` were not properly handled when performing grid-searches.

- Fixed a bug in `linear_model.LogisticRegression` and `linear_model.LogisticRegressionCV` when using `class_weight='balanced'` or `class_weight='auto'`. By Tom Dupre la Tour.
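
The `predict_proba` fix listed among these bug fixes contrasts two ways of turning per-class scores into probabilities. The sketch below shows both in plain Python so the difference is visible; the function names and the example scores are hypothetical, and this is not the library's code.

```python
import math


def ovr_normalize(scores):
    """Squash each score with a sigmoid, then rescale so the row sums to 1."""
    probs = [1.0 / (1.0 + math.exp(-s)) for s in scores]
    total = sum(probs)
    return [p / total for p in probs]


def softmax(scores):
    """Exponentiate shifted scores and normalize; the shift avoids overflow."""
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


scores = [2.0, 0.5, -1.0]  # hypothetical per-class decision scores
ovr_probs = ovr_normalize(scores)
softmax_probs = softmax(scores)
```

Both outputs sum to one and rank the classes identically, but the probability values differ noticeably, which is why the choice of normalization matters for anything that consumes the probabilities directly.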

API changes summary

- Attributes `data_min`, `data_max` and `data_range` in `preprocessing.MinMaxScaler` are deprecated and won’t be available from 0.19. Instead, the class now exposes `data_min_`, `data_max_` and `data_range_`. By Giorgio Patrini.

- All Scaler classes now have a `scale_` attribute, the feature-wise rescaling applied by their transform methods. The old attribute `std_` in `preprocessing.StandardScaler` is deprecated and superseded by `scale_`; it won’t be available in 0.19. By Giorgio Patrini.

- `svm.SVC` and `svm.NuSVC` now have a `decision_function_shape` parameter to make their decision function of shape `(n_samples, n_classes)` by setting `decision_function_shape='ovr'`. This will be the default behavior starting in 0.19. By Andreas Müller.

- Passing 1D data arrays as input to estimators is now deprecated as it caused confusion in how the array elements should be interpreted as features or as samples. All data arrays are now expected to be explicitly shaped `(n_samples, n_features)`. By Vighnesh Birodkar.

- `lda.LDA` and `qda.QDA` have been moved to `discriminant_analysis.LinearDiscriminantAnalysis` and `discriminant_analysis.QuadraticDiscriminantAnalysis`.

- The `store_covariance` and `tol` parameters have been moved from the fit method to the constructor in `discriminant_analysis.LinearDiscriminantAnalysis` and the `store_covariances` and `tol` parameters have been moved from the fit method to the constructor in `discriminant_analysis.QuadraticDiscriminantAnalysis`.

- Models inheriting from `_LearntSelectorMixin` will no longer support the transform methods (i.e., RandomForests, GradientBoosting, LogisticRegression, DecisionTrees, SVMs and SGD related models). Instead, wrap these models with the meta-transformer `feature_selection.SelectFromModel` to remove features (according to `coef_` or `feature_importances_`) which are below a certain threshold value.

- `cluster.KMeans` re-runs cluster-assignments in case of non-convergence, to ensure consistency of `predict(X)` and `labels_`. By Vighnesh Birodkar.

- Classifier and Regressor models are now tagged as such using the `_estimator_type` attribute.

- Cross-validation iterators always provide indices into training and test set, not boolean masks.

- The `decision_function` on all regressors was deprecated and will be removed in 0.19. Use `predict` instead.

- `datasets.load_lfw_pairs` is deprecated and will be removed in 0.19. Use `datasets.fetch_lfw_pairs` instead.

- The deprecated `hmm` module was removed.

- The deprecated `Bootstrap` cross-validation iterator was removed.

- The deprecated `Ward` and `WardAgglomerative` classes have been removed. Use `cluster.AgglomerativeClustering` instead.

- `cross_validation.check_cv` is now a public function.

- The property `residues_` of `linear_model.LinearRegression` is deprecated and will be removed in 0.19.

- The deprecated `n_jobs` parameter of `linear_model.LinearRegression` has been moved to the constructor.

- Removed deprecated `class_weight` parameter from `linear_model.SGDClassifier`’s `fit` method. Use the constructor parameter instead.

- The deprecated support for the sequence of sequences (or list of lists) multilabel format was removed. To convert to and from the supported binary indicator matrix format, use `MultiLabelBinarizer`.

- The behavior of calling the `inverse_transform` method of `pipeline.Pipeline` will change in 0.19. It will no longer reshape one-dimensional input to two-dimensional input.

- The deprecated attributes `indicator_matrix_`, `multilabel_` and `classes_` of `preprocessing.LabelBinarizer` were removed.

- Using `gamma=0` in `svm.SVC` and `svm.SVR` to automatically set the gamma to `1. / n_features` is deprecated and will be removed in 0.19. Use `gamma="auto"` instead.
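
The deprecation of 1D input listed in this summary means that callers need to state explicitly whether a flat array holds many samples or a single sample. A small NumPy sketch of the two readings follows; the variable names are illustrative only.

```python
import numpy as np

values = np.array([1.0, 2.0, 3.0])  # ambiguous 1D input

# Reading 1: three samples, each described by a single feature.
as_samples = values.reshape(-1, 1)

# Reading 2: one sample described by three features.
as_features = values.reshape(1, -1)
```

Either result has the explicit `(n_samples, n_features)` layout that the entry asks for; which one is right depends on what the data represents.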

Version 0.16.1

Changelog

Bug fixes

- Allow input data larger than `block_size` in `covariance.LedoitWolf`. By Andreas Müller.

- Fix a bug in `isotonic.IsotonicRegression` deduplication that caused unstable results in `calibration.CalibratedClassifierCV`. By Jan Hendrik Metzen.

- Fix sorting of labels in `preprocessing.label_binarize`. By Michael Heilman.

- Fix several stability and convergence issues in `cross_decomposition.CCA` and `cross_decomposition.PLSCanonical`. By Andreas Müller.

- Fix a bug in `cluster.KMeans` when `precompute_distances=False` on fortran-ordered data.

- Fix a speed regression in `ensemble.RandomForestClassifier`’s `predict` and `predict_proba`. By Andreas Müller.

- Fix a regression where `utils.shuffle` converted lists and dataframes to arrays. By Olivier Grisel.

Version 0.16

Highlights

- Speed improvements (notably in `cluster.DBSCAN`), reduced memory requirements, bug-fixes and better default settings.

- Multinomial Logistic regression and a path algorithm in `linear_model.LogisticRegressionCV`.

- Out-of-core learning of PCA via `decomposition.IncrementalPCA`.

- Probability calibration of classifiers using `calibration.CalibratedClassifierCV`.

- `cluster.Birch` clustering method for large-scale datasets.

- Scalable approximate nearest neighbors search with Locality-sensitive hashing forests in `neighbors.LSHForest`.

- Improved error messages and better validation when using malformed input data.

- More robust integration with pandas dataframes.

Changelog

New features

- The new `neighbors.LSHForest` implements locality-sensitive hashing for approximate nearest neighbors search. By Maheshakya Wijewardena.

- Added `svm.LinearSVR`. This class uses the liblinear implementation of Support Vector Regression which is much faster for large sample sizes than `svm.SVR` with linear kernel. By Fabian Pedregosa and Qiang Luo.

- Incremental fit for `GaussianNB`.

- Added `sample_weight` support to `dummy.DummyClassifier` and `dummy.DummyRegressor`. By Arnaud Joly.

- Added the `metrics.label_ranking_average_precision_score` metric. By Arnaud Joly.

- Added the `metrics.coverage_error` metric. By Arnaud Joly.

- Added `linear_model.LogisticRegressionCV`. By Manoj Kumar, Fabian Pedregosa, Gael Varoquaux and Alexandre Gramfort.

- Added `warm_start` constructor parameter to make it possible for any trained forest model to grow additional trees incrementally. By Laurent Direr.

- Added `sample_weight` support to `ensemble.GradientBoostingClassifier` and `ensemble.GradientBoostingRegressor`. By Peter Prettenhofer.

- Added `decomposition.IncrementalPCA`, an implementation of the PCA algorithm that supports out-of-core learning with a `partial_fit` method. By Kyle Kastner.

- Averaged SGD for `SGDClassifier` and `SGDRegressor`. By Danny Sullivan.

- Added `cross_val_predict` function which computes cross-validated estimates. By Luis Pedro Coelho.

- Added `linear_model.TheilSenRegressor`, a robust generalized-median-based estimator. By Florian Wilhelm.

- Added `metrics.median_absolute_error`, a robust metric. By Gael Varoquaux and Florian Wilhelm.

- Added `cluster.Birch`, an online clustering algorithm. By Manoj Kumar, Alexandre Gramfort and Joel Nothman.

- Added shrinkage support to `discriminant_analysis.LinearDiscriminantAnalysis` using two new solvers. By Clemens Brunner and Martin Billinger.

- Added `kernel_ridge.KernelRidge`, an implementation of kernelized ridge regression. By Mathieu Blondel and Jan Hendrik Metzen.

- All solvers in `linear_model.Ridge` now support `sample_weight`. By Mathieu Blondel.

- Added `cross_validation.PredefinedSplit` cross-validation for fixed user-provided cross-validation folds. By Thomas Unterthiner.

- Added `calibration.CalibratedClassifierCV`, an approach for calibrating the predicted probabilities of a classifier. By Alexandre Gramfort, Jan Hendrik Metzen, Mathieu Blondel and Balazs Kegl.
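
To illustrate why a median-based error such as the one added above is described as robust, the following plain-Python sketch compares the median and the mean of the absolute residuals on toy data with one outlier. The helper names `median_abs_error` and `mean_abs_error` are stand-ins written for this example, not the library functions.

```python
import statistics


def median_abs_error(y_true, y_pred):
    """Median of the absolute residuals |y_true - y_pred|."""
    return statistics.median(abs(t - p) for t, p in zip(y_true, y_pred))


def mean_abs_error(y_true, y_pred):
    """Mean of the absolute residuals, shown for comparison."""
    residuals = [abs(t - p) for t, p in zip(y_true, y_pred)]
    return sum(residuals) / len(residuals)


y_true = [3.0, -0.5, 2.0, 7.0, 100.0]  # the last target is an outlier
y_pred = [2.5, 0.0, 2.0, 8.0, 4.0]

robust = median_abs_error(y_true, y_pred)
sensitive = mean_abs_error(y_true, y_pred)
```

The single large residual dominates the mean but leaves the median untouched, so the median summarizes the typical error.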

Enhancements

- Add option `return_distance` in `hierarchical.ward_tree` to return distances between nodes for both structured and unstructured versions of the algorithm. By Matteo Visconti di Oleggio Castello. The same option was added in `hierarchical.linkage_tree`. By Manoj Kumar.

- Add support for sample weights in scorer objects. Metrics with sample weight support will automatically benefit from it. By Noel Dawe and Vlad Niculae.

- Added `newton-cg` and `lbfgs` solver support in `linear_model.LogisticRegression`. By Manoj Kumar.

- Add `selection="random"` parameter to implement stochastic coordinate descent for `linear_model.Lasso`, `linear_model.ElasticNet` and related. By Manoj Kumar.

- Add `sample_weight` parameter to `metrics.jaccard_similarity_score` and `metrics.log_loss`. By Jatin Shah.

- Support sparse multilabel indicator representation in `preprocessing.LabelBinarizer` and `multiclass.OneVsRestClassifier` (by Hamzeh Alsalhi with thanks to Rohit Sivaprasad), as well as evaluation metrics (by Joel Nothman).

- Add `sample_weight` parameter to `metrics.jaccard_similarity_score`. By Jatin Shah.

- Add support for multiclass in `metrics.hinge_loss`. Added `labels=None` as optional parameter. By Saurabh Jha.

- Add `sample_weight` parameter to `metrics.hinge_loss`. By Saurabh Jha.

- Add `multi_class="multinomial"` option in `linear_model.LogisticRegression` to implement a Logistic Regression solver that minimizes the cross-entropy or multinomial loss instead of the default One-vs-Rest setting. Supports `lbfgs` and `newton-cg` solvers. By Lars Buitinck and Manoj Kumar. Solver option `newton-cg` by Simon Wu.

- `DictVectorizer` can now perform `fit_transform` on an iterable in a single pass, when giving the option `sort=False`. By Dan Blanchard.

- `GridSearchCV` and `RandomizedSearchCV` can now be configured to work with estimators that may fail and raise errors on individual folds. This option is controlled by the `error_score` parameter. This does not affect errors raised on re-fit. By Michal Romaniuk.

- Add `digits` parameter to `metrics.classification_report` to allow the report to show different precision of floating point numbers. By Ian Gilmore.

- Add a quantile prediction strategy to the `dummy.DummyRegressor`. By Aaron Staple.

- Add `handle_unknown` option to `preprocessing.OneHotEncoder` to handle unknown categorical features more gracefully during transform. By Manoj Kumar.

- Added support for sparse input data to decision trees and their ensembles. By Fares Hedyati and Arnaud Joly.

- Optimized `cluster.AffinityPropagation` by reducing the number of memory allocations of large temporary data-structures. By Antony Lee.

- Parallelization of the computation of feature importances in random forest. By Olivier Grisel and Arnaud Joly.

- Add `n_iter_` attribute to estimators that accept a `max_iter` attribute in their constructor. By Manoj Kumar.

- Added decision function for `multiclass.OneVsOneClassifier`. By Raghav R V and Kyle Beauchamp.

- `neighbors.kneighbors_graph` and `radius_neighbors_graph` support non-Euclidean metrics. By Manoj Kumar.

- Parameter `connectivity` in `cluster.AgglomerativeClustering` and family now accepts callables that return a connectivity matrix. By Manoj Kumar.

- Sparse support for `paired_distances`. By Joel Nothman.

- `cluster.DBSCAN` now supports sparse input and sample weights and has been optimized: the inner loop has been rewritten in Cython and radius neighbors queries are now computed in batch. By Joel Nothman and Lars Buitinck.

- Add `class_weight` parameter to automatically weight samples by class frequency for `ensemble.RandomForestClassifier`, `tree.DecisionTreeClassifier`, `ensemble.ExtraTreesClassifier` and `tree.ExtraTreeClassifier`. By Trevor Stephens.

- `grid_search.RandomizedSearchCV` now does sampling without replacement if all parameters are given as lists. By Andreas Müller.

- Parallelized calculation of `pairwise_distances` is now supported for scipy metrics and custom callables. By Joel Nothman.

- Allow the fitting and scoring of all clustering algorithms in `pipeline.Pipeline`. By Andreas Müller.

- More robust seeding and improved error messages in `cluster.MeanShift`. By Andreas Müller.

- Make the stopping criterion for `mixture.GMM`, `mixture.DPGMM` and `mixture.VBGMM` less dependent on the number of samples by thresholding the average log-likelihood change instead of its sum over all samples. By Hervé Bredin.

- The outcome of `manifold.spectral_embedding` was made deterministic by flipping the sign of eigenvectors. By Hasil Sharma.

- Significant performance and memory usage improvements in `preprocessing.PolynomialFeatures`. By Eric Martin.

- Numerical stability improvements for `preprocessing.StandardScaler` and `preprocessing.scale`. By Nicolas Goix.

- `svm.SVC` fitted on sparse input now implements `decision_function`. By Rob Zinkov and Andreas Müller.

- `cross_validation.train_test_split` now preserves the input type, instead of converting to numpy arrays.
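
The entry above about sampling without replacement when all parameters are given as lists can be pictured with a short plain-Python sketch: when every parameter has a finite list of values, the full grid can be enumerated and distinct points drawn from it. This is only an illustration of the idea under that assumption; the parameter names and values are made up, and it does not reproduce the library's sampler.

```python
import itertools
import random

# Hypothetical search space in which every parameter is a finite list.
param_lists = {"C": [0.1, 1.0, 10.0], "kernel": ["linear", "rbf"]}

# Enumerate every combination once; keys are sorted for a stable order.
names = sorted(param_lists)
grid = [dict(zip(names, combo))
        for combo in itertools.product(*(param_lists[n] for n in names))]

rng = random.Random(0)
n_iter = 4
# random.sample draws distinct grid points, so no candidate is repeated.
candidates = rng.sample(grid, n_iter)
```

Drawing without replacement avoids spending fits on duplicate parameter settings, which matters most when the grid is small relative to the number of iterations.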

Documentation improvements

- Added example of using `FeatureUnion` for heterogeneous input. By Matt Terry.

- Documentation on scorers was improved, to highlight the handling of loss functions. By Matt Pico.

- A discrepancy between liblinear output and scikit-learn’s wrappers is now noted. By Manoj Kumar.

- Improved documentation generation: examples referring to a class or function are now shown in a gallery on the class/function’s API reference page. By Joel Nothman.

- More explicit documentation of sample generators and of data transformation. By Joel Nothman.

- `sklearn.neighbors.BallTree` and `sklearn.neighbors.KDTree` used to point to empty pages stating that they are aliases of BinaryTree. This has been fixed to show the correct class docs. By Manoj Kumar.

- Added silhouette plots for analysis of KMeans clustering using `metrics.silhouette_samples` and `metrics.silhouette_score`. See “Selecting the number of clusters with silhouette analysis on KMeans clustering”.

Bug fixes

- Metaestimators now support ducktyping for the presence of `decision_function`, `predict_proba` and other methods. This fixes behavior of `grid_search.GridSearchCV`, `grid_search.RandomizedSearchCV`, `pipeline.Pipeline`, `feature_selection.RFE`, `feature_selection.RFECV` when nested. By Joel Nothman.

- The `scoring` attribute of grid-search and cross-validation methods is no longer ignored when a `grid_search.GridSearchCV` is given as a base estimator or the base estimator doesn’t have predict.

- The function `hierarchical.ward_tree` now returns the children in the same order for both the structured and unstructured versions. By Matteo Visconti di Oleggio Castello.

- `feature_selection.RFECV` now correctly handles cases when `step` is not equal to 1. By Nikolay Mayorov.

- `decomposition.PCA` now undoes whitening in its `inverse_transform`. Also, its `components_` now always have unit length. By Michael Eickenberg.

- Fix incomplete download of the dataset when `datasets.download_20newsgroups` is called. By Manoj Kumar.

- Various fixes to the Gaussian processes subpackage. By Vincent Dubourg and Jan Hendrik Metzen.

- Calling `partial_fit` with `class_weight=='auto'` throws an appropriate error message and suggests a workaround. By Danny Sullivan.

- `RBFSampler` with `gamma=g` formerly approximated `rbf_kernel` with `gamma=g/2.`; the definition of `gamma` is now consistent, which may substantially change your results if you use a fixed value. (If you cross-validated over `gamma`, it probably doesn’t matter too much.) By Dougal Sutherland.

- Pipeline objects now delegate the `classes_` attribute to the underlying estimator. This makes it possible, for instance, to bag a pipeline object. By Arnaud Joly.

- `neighbors.NearestCentroid` now uses the median as the centroid when metric is set to `manhattan`. It was using the mean before. By Manoj Kumar.

- Fix numerical stability issues in `linear_model.SGDClassifier` and `linear_model.SGDRegressor` by clipping large gradients and ensuring that weight decay rescaling is always positive (for large l2 regularization and large learning rate values). By Olivier Grisel.

- In `cluster.AgglomerativeClustering` (and friends), when `compute_full_tree` was set to “auto”, the full tree was built when `n_clusters` was high and construction stopped early when `n_clusters` was low, while the behavior should be the opposite. This has been fixed. By Manoj Kumar.

- Fix lazy centering of data in `linear_model.enet_path` and `linear_model.lasso_path`. It was centered around one. It has been changed to be centered around the origin. By Manoj Kumar.

- Fix handling of precomputed affinity matrices in `cluster.AgglomerativeClustering` when using connectivity constraints. By Cathy Deng.

- Correct `partial_fit` handling of `class_prior` for `sklearn.naive_bayes.MultinomialNB` and `sklearn.naive_bayes.BernoulliNB`. By Trevor Stephens.

- Fixed a crash in `metrics.precision_recall_fscore_support` when using unsorted `labels` in the multi-label setting. By Andreas Müller.

- Avoid skipping the first nearest neighbor in the methods `radius_neighbors`, `kneighbors`, `kneighbors_graph` and `radius_neighbors_graph` in `sklearn.neighbors.NearestNeighbors` and family, when the query data is not the same as fit data. By Manoj Kumar.

- Fix log-density calculation in the `mixture.GMM` with tied covariance. By Will Dawson.

- Fixed a scaling error in `feature_selection.SelectFdr` where a factor `n_features` was missing. By Andrew Tulloch.

- Fix zero division in `neighbors.KNeighborsRegressor` and related classes when using distance weighting and having identical data points. By Garret-R.

- Fixed round off errors with non positive-definite covariance matrices in GMM. By Alexis Mignon.

- Fixed an error in the computation of conditional probabilities in `naive_bayes.BernoulliNB`. By Hanna Wallach.

- Make the method `radius_neighbors` of `neighbors.NearestNeighbors` return the samples lying on the boundary for `algorithm='brute'`. By Yan Yi.

- Flip sign of `dual_coef_` of `svm.SVC` to make it consistent with the documentation and `decision_function`. By Artem Sobolev.

- Fixed handling of ties in `isotonic.IsotonicRegression`. We now use the weighted average of targets (secondary method). By Andreas Müller and Michael Bommarito.
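
The `NearestCentroid` fix in this list switches from the mean to the median under the Manhattan metric. The reason is that the coordinate-wise median minimizes the summed L1 distance to a set of points, which the plain-Python sketch below checks on toy data. The helper name `total_manhattan` and the points are hypothetical.

```python
import statistics


def total_manhattan(points, center):
    """Sum of L1 distances from every point to the candidate center."""
    return sum(sum(abs(p - c) for p, c in zip(point, center))
               for point in points)


points = [(0.0, 0.0), (1.0, 2.0), (10.0, 1.0)]
columns = list(zip(*points))  # one tuple of values per coordinate

mean_center = tuple(statistics.mean(col) for col in columns)
median_center = tuple(statistics.median(col) for col in columns)

cost_mean = total_manhattan(points, mean_center)
cost_median = total_manhattan(points, median_center)
```

On this data the median centroid has a strictly lower total Manhattan distance than the mean, which is pulled toward the far point at `x = 10`.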

API changes summary

- `GridSearchCV` and `cross_val_score` and other meta-estimators don’t convert pandas DataFrames into arrays any more, allowing DataFrame specific operations in custom estimators.

- `multiclass.fit_ovr`, `multiclass.predict_ovr`, `predict_proba_ovr`, `multiclass.fit_ovo`, `multiclass.predict_ovo`, `multiclass.fit_ecoc` and `multiclass.predict_ecoc` are deprecated. Use the underlying estimators instead.

- Nearest neighbors estimators used to take arbitrary keyword arguments and pass these to their distance metric. This will no longer be supported in scikit-learn 0.18; use the `metric_params` argument instead.

- The `n_jobs` parameter of the fit method was moved to the constructor of the `LinearRegression` class.

- The `predict_proba` method of `multiclass.OneVsRestClassifier` now returns two probabilities per sample in the multiclass case; this is consistent with other estimators and with the method’s documentation, but previous versions accidentally returned only the positive probability. Fixed by Will Lamond and Lars Buitinck.

- Change default value of `precompute` in `ElasticNet` and `Lasso` to False. Setting `precompute` to “auto” was found to be slower when n_samples > n_features since the computation of the Gram matrix is computationally expensive and outweighs the benefit of fitting the Gram for just one alpha. `precompute="auto"` is now deprecated and will be removed in 0.18. By Manoj Kumar.

- Expose `positive` option in `linear_model.enet_path`, which constrains coefficients to be positive. By Manoj Kumar.

- Users should now supply an explicit `average` parameter to `sklearn.metrics.f1_score`, `sklearn.metrics.fbeta_score`, `sklearn.metrics.recall_score` and `sklearn.metrics.precision_score` when performing multiclass or multilabel (i.e. not binary) classification. By Joel Nothman.

- The `scoring` parameter for cross validation now accepts ‘f1_micro’, ‘f1_macro’ or ‘f1_weighted’. ‘f1’ is now for binary classification only. Similar changes apply to ‘precision’ and ‘recall’. By Joel Nothman.

- The fit_intercept, normalize and return_models parameters in linear_model.enet_path and linear_model.lasso_path have been removed. They had been deprecated since 0.14.

- From now onwards, all estimators will uniformly raise NotFittedError (utils.validation.NotFittedError) when any of the predict-like methods are called before the model is fit. By Raghav R V.

- Input data validation was refactored for more consistent input validation. The check_arrays function was replaced by check_array and check_X_y. By Andreas Müller.

- Allow X=None in the methods radius_neighbors, kneighbors, kneighbors_graph and radius_neighbors_graph in sklearn.neighbors.NearestNeighbors and family. If set to None, then for every sample this avoids setting the sample itself as the first nearest neighbor. By Manoj Kumar.

- Add parameter include_self in neighbors.kneighbors_graph and neighbors.radius_neighbors_graph which has to be explicitly set by the user. If set to True, then the sample itself is considered as the first nearest neighbor.

- The thresh parameter is deprecated in favor of the new tol parameter in GMM, DPGMM and VBGMM. See Enhancements section for details. By Hervé Bredin.

- Estimators will treat input with dtype object as numeric when possible. By Andreas Müller.

- Estimators now raise ValueError consistently when fitted on empty data (less than 1 sample or less than 1 feature for 2D input). By Olivier Grisel.

- The shuffle option of linear_model.SGDClassifier, linear_model.SGDRegressor, linear_model.Perceptron, linear_model.PassiveAggressiveClassifier and linear_model.PassiveAggressiveRegressor now defaults to True.

- cluster.DBSCAN now uses a deterministic initialization. The random_state parameter is deprecated. By Erich Schubert.
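The requirement above to pass an explicit average parameter for multiclass targets can be illustrated with a small sketch. The toy labels are made up for illustration, and the snippet assumes a scikit-learn installation in which sklearn.metrics.f1_score accepts the average keyword, as described in this changelog.

```python
from sklearn.metrics import f1_score

# Toy three-class problem (illustrative data, not taken from the changelog).
y_true = [0, 1, 2, 0, 1, 2]
y_pred = [0, 2, 1, 0, 0, 1]

# For non-binary targets the averaging strategy is stated explicitly.
macro = f1_score(y_true, y_pred, average="macro")        # unweighted mean of per-class F1
micro = f1_score(y_true, y_pred, average="micro")        # computed from global TP/FP/FN counts
weighted = f1_score(y_true, y_pred, average="weighted")  # per-class F1 weighted by support

print(macro, micro, weighted)
```

Here only class 0 is ever predicted correctly, so the macro and micro averages differ; choosing between them depends on whether every class or every sample should count equally.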

Version 0.15.2¶

Bug fixes¶

- Fixed handling of the p parameter of the Minkowski distance that was previously ignored in nearest neighbors models. By Nikolay Mayorov.

- Fixed duplicated alphas in linear_model.LassoLars with early stopping on 32 bit Python. By Olivier Grisel and Fabian Pedregosa.

- Fixed the build under Windows when scikit-learn is built with MSVC while NumPy is built with MinGW. By Olivier Grisel and Federico Vaggi.

- Fixed an array index overflow bug in the coordinate descent solver. By Gael Varoquaux.

- Better handling of numpy 1.9 deprecation warnings. By Gael Varoquaux.

- Removed unnecessary data copy in cluster.KMeans. By Gael Varoquaux.

- Explicitly close open files to avoid ResourceWarnings under Python 3. By Calvin Giles.

- The transform of discriminant_analysis.LinearDiscriminantAnalysis now projects the input on the most discriminant directions. By Martin Billinger.

- Fixed potential overflow in _tree.safe_realloc by Lars Buitinck.

- Performance optimization in isotonic.IsotonicRegression. By Robert Bradshaw.

- nose is no longer a runtime dependency to import sklearn, only for running the tests. By Joel Nothman.

- Many documentation and website fixes by Joel Nothman, Lars Buitinck, Matt Pico, and others.

Version 0.15.1¶

Bug fixes¶

- Made cross_validation.cross_val_score use cross_validation.KFold instead of cross_validation.StratifiedKFold on multi-output classification problems. By Nikolay Mayorov.

- Support unseen labels in preprocessing.LabelBinarizer to restore the default behavior of 0.14.1 for backward compatibility. By Hamzeh Alsalhi.

- Fixed the cluster.KMeans stopping criterion that prevented early convergence detection. By Edward Raff and Gael Varoquaux.

- Fixed the behavior of multiclass.OneVsOneClassifier in case of ties at the per-class vote level by computing the correct per-class sum of prediction scores. By Andreas Müller.

- Made cross_validation.cross_val_score and grid_search.GridSearchCV accept Python lists as input data. This is especially useful for cross-validation and model selection of text processing pipelines. By Andreas Müller.

- Fixed data input checks of most estimators to accept input data that implements the NumPy __array__ protocol. This is the case for pandas.Series and pandas.DataFrame in recent versions of pandas. By Gael Varoquaux.

- Fixed a regression for linear_model.SGDClassifier with class_weight="auto" on data with non-contiguous labels. By Olivier Grisel.

Version 0.15¶

Highlights¶

- Many speed and memory improvements all across the code

- Huge speed and memory improvements to random forests (and extra trees) that also benefit better from parallel computing.

- Incremental fit to BernoulliRBM

- Added cluster.AgglomerativeClustering for hierarchical agglomerative clustering with average linkage, complete linkage and ward strategies.

- Added linear_model.RANSACRegressor for robust regression models.

- Added dimensionality reduction with manifold.TSNE which can be used to visualize high-dimensional data.

Changelog¶

New features¶

- Added ensemble.BaggingClassifier and ensemble.BaggingRegressor meta-estimators for ensembling any kind of base estimator. See the Bagging section of the user guide for details and examples. By Gilles Louppe.

- New unsupervised feature selection algorithm feature_selection.VarianceThreshold, by Lars Buitinck.

- Added linear_model.RANSACRegressor meta-estimator for the robust fitting of regression models. By Johannes Schönberger.

- Added cluster.AgglomerativeClustering for hierarchical agglomerative clustering with average linkage, complete linkage and ward strategies, by Nelle Varoquaux and Gael Varoquaux.

- Shorthand constructors pipeline.make_pipeline and pipeline.make_union were added by Lars Buitinck.

- Shuffle option for cross_validation.StratifiedKFold. By Jeffrey Blackburne.

- Incremental learning (partial_fit) for Gaussian Naive Bayes by Imran Haque.

- Added partial_fit to BernoulliRBM. By Danny Sullivan.

- Added learning_curve utility to chart performance with respect to training size. See Plotting Learning Curves. By Alexander Fabisch.

- Add positive option in LassoCV and ElasticNetCV. By Brian Wignall and Alexandre Gramfort.

- Added linear_model.MultiTaskElasticNetCV and linear_model.MultiTaskLassoCV. By Manoj Kumar.

- Added manifold.TSNE. By Alexander Fabisch.
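As a brief illustration of the shorthand constructor pipeline.make_pipeline listed above, the following sketch builds a two-step pipeline without naming the steps by hand. The choice of StandardScaler and LogisticRegression is arbitrary and only serves the example.

```python
from sklearn.linear_model import LogisticRegression
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# make_pipeline derives each step name from the lowercased class name,
# so no explicit (name, estimator) tuples are needed.
pipe = make_pipeline(StandardScaler(), LogisticRegression())

step_names = [name for name, _ in pipe.steps]
print(step_names)
```

The generated names matter when setting nested parameters, for example in a grid search over a pipeline step's parameters.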

Enhancements¶

- Add sparse input support to ensemble.AdaBoostClassifier and ensemble.AdaBoostRegressor meta-estimators. By Hamzeh Alsalhi.

- Memory improvements of decision trees, by Arnaud Joly.

- Decision trees can now be built in best-first manner by using max_leaf_nodes as the stopping criterion. Refactored the tree code to use either a stack or a priority queue for tree building. By Peter Prettenhofer and Gilles Louppe.

- Decision trees can now be fitted on fortran- and c-style arrays, and non-contiguous arrays without the need to make a copy. If the input array has a different dtype than np.float32, a fortran-style copy will be made since fortran-style memory layout has speed advantages. By Peter Prettenhofer and Gilles Louppe.

- Speed improvement of regression trees by optimizing the computation of the mean square error criterion. This led to speed improvements of the tree, forest and gradient boosting tree modules. By Arnaud Joly.

- The img_to_graph and grid_to_graph functions in sklearn.feature_extraction.image now return np.ndarray instead of np.matrix when return_as=np.ndarray. See the Notes section for more information on compatibility.

- Changed the internal storage of decision trees to use a struct array. This fixed some small bugs, while improving code and providing a small speed gain. By Joel Nothman.

- Reduce memory usage and overhead when fitting and predicting with forests of randomized trees in parallel with n_jobs != 1 by leveraging new threading backend of joblib 0.8 and releasing the GIL in the tree fitting Cython code. By Olivier Grisel and Gilles Louppe.

- Speed improvement of the sklearn.ensemble.gradient_boosting module. By Gilles Louppe and Peter Prettenhofer.

- Various enhancements to the sklearn.ensemble.gradient_boosting module: a warm_start argument to fit additional trees, a max_leaf_nodes argument to fit GBM style trees, a monitor fit argument to inspect the estimator during training, and refactoring of the verbose code. By Peter Prettenhofer.

- Faster sklearn.ensemble.ExtraTrees by caching feature values. By Arnaud Joly.

- Faster depth-based tree building algorithm such as decision tree, random forest, extra trees or gradient tree boosting (with depth based growing strategy) by avoiding trying to split on found constant features in the sample subset. By Arnaud Joly.

- Add min_weight_fraction_leaf pre-pruning parameter to tree-based methods: the minimum weighted fraction of the input samples required to be at a leaf node. By Noel Dawe.

- Added metrics.pairwise_distances_argmin_min, by Philippe Gervais.

- Added predict method to cluster.AffinityPropagation and cluster.MeanShift, by Mathieu Blondel.

- Vector and matrix multiplications have been optimised throughout the library by Denis Engemann, and Alexandre Gramfort. In particular, they should take less memory with older NumPy versions (prior to 1.7.2).

- Precision-recall and ROC examples now use train_test_split, and have more explanation of why these metrics are useful. By Kyle Kastner

- The training algorithm for decomposition.NMF is faster for sparse matrices and has much lower memory complexity, meaning it will scale up gracefully to large datasets. By Lars Buitinck.

- Added svd_method option with default value “randomized” to decomposition.FactorAnalysis to save memory and significantly speed up computation by Denis Engemann and Alexandre Gramfort.

- Changed cross_validation.StratifiedKFold to try and preserve as much of the original ordering of samples as possible so as not to hide overfitting on datasets with a non-negligible level of samples dependency. By Daniel Nouri and Olivier Grisel.

- Add multi-output support to gaussian_process.GaussianProcess by John Novak.

- Support for precomputed distance matrices in nearest neighbor estimators by Robert Layton and Joel Nothman.

- Norm computations optimized for NumPy 1.6 and later versions by Lars Buitinck. In particular, the k-means algorithm no longer needs a temporary data structure the size of its input.

- dummy.DummyClassifier can now be used to predict a constant output value. By Manoj Kumar.

- dummy.DummyRegressor now has a strategy parameter which allows predicting the mean, the median of the training set or a constant output value. By Maheshakya Wijewardena.

- Multi-label classification output in multilabel indicator format is now supported by metrics.roc_auc_score and metrics.average_precision_score by Arnaud Joly.

- Significant performance improvements (more than 100x speedup for large problems) in isotonic.IsotonicRegression by Andrew Tulloch.

- Speed and memory usage improvements to the SGD algorithm for linear models: it now uses threads, not separate processes, when n_jobs>1. By Lars Buitinck.

- Grid search and cross validation allow NaNs in the input arrays so that preprocessors such as preprocessing.Imputer can be trained within the cross validation loop, avoiding potentially skewed results.

- Ridge regression can now deal with sample weights in feature space (only sample space until then). By Michael Eickenberg. Both solutions are provided by the Cholesky solver.

- Several classification and regression metrics now support weighted samples with the new sample_weight argument: metrics.accuracy_score, metrics.zero_one_loss, metrics.precision_score, metrics.average_precision_score, metrics.f1_score, metrics.fbeta_score, metrics.recall_score, metrics.roc_auc_score, metrics.explained_variance_score, metrics.mean_squared_error, metrics.mean_absolute_error, metrics.r2_score. By Noel Dawe.

- Speed up of the sample generator datasets.make_multilabel_classification. By Joel Nothman.
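The sample_weight argument mentioned in the list above can be sketched with metrics.accuracy_score. The labels and weights below are invented for illustration; the point is only that a misclassified sample with a larger weight lowers the score more.

```python
from sklearn.metrics import accuracy_score

# Illustrative data: the third sample is misclassified.
y_true = [0, 1, 1, 0]
y_pred = [0, 1, 0, 0]

# Every sample counts equally: 3 correct out of 4.
unweighted = accuracy_score(y_true, y_pred)

# The misclassified sample carries weight 2, so the score becomes
# (1 + 1 + 1) / (1 + 1 + 2 + 1) = 3 / 5.
weighted = accuracy_score(y_true, y_pred, sample_weight=[1, 1, 2, 1])

print(unweighted, weighted)
```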

Documentation improvements¶

- The Working With Text Data tutorial has now been integrated into the main documentation’s tutorial section. It includes exercises and skeletons for tutorial presentation. Original tutorial created by several authors including Olivier Grisel, Lars Buitinck and many others. Tutorial integration into the scikit-learn documentation by Jaques Grobler.

- Added Computational Performance documentation. Discussion and examples of prediction latency / throughput and different factors that have influence over speed. Additional tips for building faster models and choosing a relevant compromise between speed and predictive power. By Eustache Diemert.

Bug fixes¶

- Fixed bug in decomposition.MiniBatchDictionaryLearning: partial_fit was not working properly.

- Fixed bug in linear_model.stochastic_gradient: l1_ratio was used as (1.0 - l1_ratio).

- Fixed bug in multiclass.OneVsOneClassifier with string labels.

- Fixed a bug in LassoCV and ElasticNetCV: they would not pre-compute the Gram matrix with precompute=True or precompute="auto" and n_samples > n_features. By Manoj Kumar.

- Fixed incorrect estimation of the degrees of freedom in feature_selection.f_regression when variates are not centered. By Virgile Fritsch.

- Fixed a race condition in parallel processing with pre_dispatch != "all" (for instance in cross_val_score). By Olivier Grisel.

- Raise error in cluster.FeatureAgglomeration and cluster.WardAgglomeration when no samples are given, rather than returning meaningless clustering.

- Fixed bug in gradient_boosting.GradientBoostingRegressor with loss='huber': gamma might not have been initialized.

- Fixed feature importances as computed with a forest of randomized trees when fit with sample_weight != None and/or with bootstrap=True. By Gilles Louppe.

API changes summary¶

- sklearn.hmm is deprecated. Its removal is planned for the 0.17 release.

- Use of covariance.EllipticEnvelop has now been removed after deprecation. Please use covariance.EllipticEnvelope instead.

- cluster.Ward is deprecated. Use cluster.AgglomerativeClustering instead.

- cluster.WardClustering is deprecated. Use cluster.AgglomerativeClustering instead.

- cross_validation.Bootstrap is deprecated. cross_validation.KFold or cross_validation.ShuffleSplit are recommended instead.

- Direct support for the sequence of sequences (or list of lists) multilabel format is deprecated. To convert to and from the supported binary indicator matrix format, use MultiLabelBinarizer. By Joel Nothman.

- Add score method to PCA following the model of probabilistic PCA and deprecate the ProbabilisticPCA model, whose score implementation is not correct. The computation now also exploits the matrix inversion lemma for faster computation. By Alexandre Gramfort.

- The score method of FactorAnalysis now returns the average log-likelihood of the samples. Use score_samples to get log-likelihood of each sample. By Alexandre Gramfort.

- Generating boolean masks (the setting indices=False) from cross-validation generators is deprecated. Support for masks will be removed in 0.17. The generators have produced arrays of indices by default since 0.10. By Joel Nothman.

- 1-d arrays containing strings with dtype=object (as used in Pandas) are now considered valid classification targets. This fixes a regression from version 0.13 in some classifiers. By Joel Nothman.

- Fix wrong explained_variance_ratio_ attribute in RandomizedPCA. By Alexandre Gramfort.

- Fit alphas for each l1_ratio instead of mean_l1_ratio in linear_model.ElasticNetCV and linear_model.LassoCV. This changes the shape of alphas_ from (n_alphas,) to (n_l1_ratio, n_alphas) if the l1_ratio provided is a 1-D array like object of length greater than one. By Manoj Kumar.

- Fix linear_model.ElasticNetCV and linear_model.LassoCV when fitting intercept and input data is sparse. The automatic grid of alphas was not computed correctly and the scaling with normalize was wrong. By Manoj Kumar.

- Fix wrong maximal number of features drawn (max_features) at each split for decision trees, random forests and gradient tree boosting. Previously, the count for the number of drawn features started only after one non-constant feature was found in the split. This bug fix will affect computational and generalization performance of those algorithms in the presence of constant features. To get back previous generalization performance, you should modify the value of max_features. By Arnaud Joly.

- Fix wrong maximal number of features drawn (max_features) at each split for ensemble.ExtraTreesClassifier and ensemble.ExtraTreesRegressor. Previously, only non-constant features in the split were counted as drawn. Now constant features are counted as drawn. Furthermore at least one feature must be non-constant in order to make a valid split. This bug fix will affect computational and generalization performance of extra trees in the presence of constant features. To get back previous generalization performance, you should modify the value of max_features. By Arnaud Joly.

- Fix utils.compute_class_weight when class_weight=="auto". Previously it was broken for input of non-integer dtype and the weighted array that was returned was wrong. By Manoj Kumar.

- Fix cross_validation.Bootstrap to raise ValueError when n_train + n_test > n. By Ronald Phlypo.
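The conversion between the deprecated sequence-of-sequences multilabel format and the binary indicator matrix, mentioned in the list above, might look like the following sketch. The label sets are illustrative, and the import path sklearn.preprocessing.MultiLabelBinarizer is the one used by current scikit-learn releases.

```python
from sklearn.preprocessing import MultiLabelBinarizer

# Sequence-of-sequences format: one collection of labels per sample.
label_sets = [(1, 2), (3,)]

mlb = MultiLabelBinarizer()

# Binary indicator matrix: one row per sample, one column per known label.
Y = mlb.fit_transform(label_sets)
print(mlb.classes_)  # column order of the indicator matrix
print(Y)

# inverse_transform maps indicator rows back to tuples of labels.
recovered = mlb.inverse_transform(Y)
print(recovered)
```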

People¶

List of contributors for release 0.15 by number of commits.

- 312 Olivier Grisel

- 275 Lars Buitinck

- 221 Gael Varoquaux

- 148 Arnaud Joly

- 134 Johannes Schönberger

- 119 Gilles Louppe

- 113 Joel Nothman

- 111 Alexandre Gramfort

- 95 Jaques Grobler

- 89 Denis Engemann

- 83 Peter Prettenhofer

- 83 Alexander Fabisch

- 62 Mathieu Blondel

- 60 Eustache Diemert

- 60 Nelle Varoquaux

- 49 Michael Bommarito

- 45 Manoj-Kumar-S

- 28 Kyle Kastner

- 26 Andreas Mueller

- 22 Noel Dawe

- 21 Maheshakya Wijewardena

- 21 Brooke Osborn

- 21 Hamzeh Alsalhi

- 21 Jake VanderPlas

- 21 Philippe Gervais

- 19 Bala Subrahmanyam Varanasi

- 12 Ronald Phlypo

- 10 Mikhail Korobov

- 8 Thomas Unterthiner

- 8 Jeffrey Blackburne

- 8 eltermann

- 8 bwignall

- 7 Ankit Agrawal

- 7 CJ Carey

- 6 Daniel Nouri

- 6 Chen Liu

- 6 Michael Eickenberg

- 6 ugurthemaster

- 5 Aaron Schumacher

- 5 Baptiste Lagarde

- 5 Rajat Khanduja

- 5 Robert McGibbon

- 5 Sergio Pascual

- 4 Alexis Metaireau

- 4 Ignacio Rossi

- 4 Virgile Fritsch

- 4 Sebastian Saeger

- 4 Ilambharathi Kanniah

- 4 sdenton4

- 4 Robert Layton

- 4 Alyssa

- 4 Amos Waterland

- 3 Andrew Tulloch

- 3 murad

- 3 Steven Maude

- 3 Karol Pysniak

- 3 Jacques Kvam

- 3 cgohlke

- 3 cjlin

- 3 Michael Becker

- 3 hamzeh

- 3 Eric Jacobsen

- 3 john collins

- 3 kaushik94

- 3 Erwin Marsi

- 2 csytracy

- 2 LK

- 2 Vlad Niculae

- 2 Laurent Direr

- 2 Erik Shilts

- 2 Raul Garreta

- 2 Yoshiki Vázquez Baeza

- 2 Yung Siang Liau

- 2 abhishek thakur

- 2 James Yu

- 2 Rohit Sivaprasad

- 2 Roland Szabo

- 2 amormachine

- 2 Alexis Mignon

- 2 Oscar Carlsson

- 2 Nantas Nardelli

- 2 jess010

- 2 kowalski87

- 2 Andrew Clegg

- 2 Federico Vaggi

- 2 Simon Frid

- 2 Félix-Antoine Fortin

- 1 Ralf Gommers

- 1 t-aft

- 1 Ronan Amicel

- 1 Rupesh Kumar Srivastava

- 1 Ryan Wang

- 1 Samuel Charron

- 1 Samuel St-Jean

- 1 Fabian Pedregosa

- 1 Skipper Seabold

- 1 Stefan Walk

- 1 Stefan van der Walt

- 1 Stephan Hoyer

- 1 Allen Riddell

- 1 Valentin Haenel

- 1 Vijay Ramesh

- 1 Will Myers

- 1 Yaroslav Halchenko

- 1 Yoni Ben-Meshulam

- 1 Yury V. Zaytsev

- 1 adrinjalali

- 1 ai8rahim

- 1 alemagnani

- 1 alex

- 1 benjamin wilson

- 1 chalmerlowe

- 1 dzikie drożdże

- 1 jamestwebber

- 1 matrixorz

- 1 popo

- 1 samuela

- 1 François Boulogne

- 1 Alexander Measure

- 1 Ethan White

- 1 Guilherme Trein

- 1 Hendrik Heuer

- 1 IvicaJovic

- 1 Jan Hendrik Metzen

- 1 Jean Michel Rouly

- 1 Eduardo Ariño de la Rubia

- 1 Jelle Zijlstra

- 1 Eddy L O Jansson

- 1 Denis

- 1 John

- 1 John Schmidt

- 1 Jorge Cañardo Alastuey

- 1 Joseph Perla

- 1 Joshua Vredevoogd

- 1 José Ricardo

- 1 Julien Miotte

- 1 Kemal Eren

- 1 Kenta Sato

- 1 David Cournapeau

- 1 Kyle Kelley

- 1 Daniele Medri

- 1 Laurent Luce

- 1 Laurent Pierron

- 1 Luis Pedro Coelho

- 1 DanielWeitzenfeld

- 1 Craig Thompson

- 1 Chyi-Kwei Yau

- 1 Matthew Brett

- 1 Matthias Feurer

- 1 Max Linke

- 1 Chris Filo Gorgolewski

- 1 Charles Earl

- 1 Michael Hanke

- 1 Michele Orrù

- 1 Bryan Lunt

- 1 Brian Kearns

- 1 Paul Butler

- 1 Paweł Mandera

- 1 Peter

- 1 Andrew Ash

- 1 Pietro Zambelli

- 1 staubda

Version 0.14¶

Changelog¶

- Missing values with sparse and dense matrices can be imputed with the transformer preprocessing.Imputer by Nicolas Trésegnie.

- The core implementation of decision trees has been rewritten from scratch, allowing for faster tree induction and lower memory consumption in all tree-based estimators. By Gilles Louppe.

- Added ensemble.AdaBoostClassifier and ensemble.AdaBoostRegressor, by Noel Dawe and Gilles Louppe. See the AdaBoost section of the user guide for details and examples.

- Added grid_search.RandomizedSearchCV and grid_search.ParameterSampler for randomized hyperparameter optimization. By Andreas Müller.

- Added biclustering algorithms (sklearn.cluster.bicluster.SpectralCoclustering and sklearn.cluster.bicluster.SpectralBiclustering), data generation methods (sklearn.datasets.make_biclusters and sklearn.datasets.make_checkerboard), and scoring metrics (sklearn.metrics.consensus_score). By Kemal Eren.

- Added Restricted Boltzmann Machines (neural_network.BernoulliRBM). By Yann Dauphin.

- Python 3 support by Justin Vincent, Lars Buitinck, Subhodeep Moitra and Olivier Grisel. All tests now pass under Python 3.3.

- Ability to pass one penalty (alpha value) per target in linear_model.Ridge, by @eickenberg and Mathieu Blondel.

- Fixed sklearn.linear_model.stochastic_gradient.py L2 regularization issue (minor practical significance). By Norbert Crombach and Mathieu Blondel.

- Added an interactive version of Andreas Müller’s Machine Learning Cheat Sheet (for scikit-learn) to the documentation. See Choosing the right estimator. By Jaques Grobler.

- grid_search.GridSearchCV and cross_validation.cross_val_score now support the use of advanced scoring functions such as area under the ROC curve and f-beta scores. See The scoring parameter: defining model evaluation rules for details. By Andreas Müller and Lars Buitinck. Passing a function from sklearn.metrics as score_func is deprecated.

- Multi-label classification output is now supported by metrics.accuracy_score, metrics.zero_one_loss, metrics.f1_score, metrics.fbeta_score, metrics.classification_report, metrics.precision_score and metrics.recall_score by Arnaud Joly.

- Two new metrics metrics.hamming_loss and metrics.jaccard_similarity_score are added with multi-label support by Arnaud Joly.

- Speed and memory usage improvements in feature_extraction.text.CountVectorizer and feature_extraction.text.TfidfVectorizer, by Jochen Wersdörfer and Roman Sinayev.

- The min_df parameter in feature_extraction.text.CountVectorizer and feature_extraction.text.TfidfVectorizer, which used to be 2, has been reset to 1 to avoid unpleasant surprises (empty vocabularies) for novice users who try it out on tiny document collections. A value of at least 2 is still recommended for practical use.

- svm.LinearSVC, linear_model.SGDClassifier and linear_model.SGDRegressor now have a sparsify method that converts their coef_ into a sparse matrix, meaning stored models trained using these estimators can be made much more compact.

- linear_model.SGDClassifier now produces multiclass probability estimates when trained under log loss or modified Huber loss.

- Hyperlinks to documentation in example code on the website by Martin Luessi.

- Fixed bug in preprocessing.MinMaxScaler causing incorrect scaling of the features for non-default feature_range settings. By Andreas Müller.

- max_features in tree.DecisionTreeClassifier, tree.DecisionTreeRegressor and all derived ensemble estimators now supports percentage values. By Gilles Louppe.

- Performance improvements in isotonic.IsotonicRegression by Nelle Varoquaux.

- metrics.accuracy_score has an option normalize to return the fraction or the number of correctly classified samples by Arnaud Joly.

- Added metrics.log_loss that computes log loss, aka cross-entropy loss. By Jochen Wersdörfer and Lars Buitinck.

- A bug that caused ensemble.AdaBoostClassifier to output incorrect probabilities has been fixed.

- Feature selectors now share a mixin providing consistent transform, inverse_transform and get_support methods. By Joel Nothman.

- A fitted grid_search.GridSearchCV or grid_search.RandomizedSearchCV can now generally be pickled. By Joel Nothman.

- Refactored and vectorized implementation of metrics.roc_curve and metrics.precision_recall_curve. By Joel Nothman.

- The new estimator sklearn.decomposition.TruncatedSVD performs dimensionality reduction using SVD on sparse matrices, and can be used for latent semantic analysis (LSA). By Lars Buitinck.

- Added self-contained example of out-of-core learning on text data: Out-of-core classification of text documents. By Eustache Diemert.

- The default number of components for sklearn.decomposition.RandomizedPCA is now correctly documented to be n_features. This was the default behavior, so programs using it will continue to work as they did.

- sklearn.cluster.KMeans now fits several orders of magnitude faster on sparse data (the speedup depends on the sparsity). By Lars Buitinck.

- Reduce memory footprint of FastICA by Denis Engemann and Alexandre Gramfort.

- Verbose output in sklearn.ensemble.gradient_boosting now uses a column format and prints progress in decreasing frequency. It also shows the remaining time. By Peter Prettenhofer.

- sklearn.ensemble.gradient_boosting provides out-of-bag improvement oob_improvement_ rather than the OOB score for model selection. An example that shows how to use OOB estimates to select the number of trees was added. By Peter Prettenhofer.

- Most metrics now support string labels for multiclass classification by Arnaud Joly and Lars Buitinck.

- New OrthogonalMatchingPursuitCV class by Alexandre Gramfort and Vlad Niculae.

- Fixed a bug in sklearn.covariance.GraphLassoCV: the ‘alphas’ parameter now works as expected when given a list of values. By Philippe Gervais.

- Fixed an important bug in sklearn.covariance.GraphLassoCV that prevented all folds provided by a CV object from being used (only the first 3 were used). When providing a CV object, execution time may thus increase significantly compared to the previous version (but results are correct now). By Philippe Gervais.

- cross_validation.cross_val_score and the grid_search module are now tested with multi-output data by Arnaud Joly.

- datasets.make_multilabel_classification can now return the output in label indicator multilabel format by Arnaud Joly.

- K-nearest neighbors, neighbors.KNeighborsRegressor and neighbors.KNeighborsClassifier, and radius neighbors, neighbors.RadiusNeighborsRegressor and neighbors.RadiusNeighborsClassifier support multioutput data by Arnaud Joly.

- Random state in LibSVM-based estimators (svm.SVC, NuSVC, OneClassSVM, svm.SVR, svm.NuSVR) can now be controlled. This is useful to ensure consistency in the probability estimates for the classifiers trained with probability=True. By Vlad Niculae.

- Out-of-core learning support for discrete naive Bayes classifiers sklearn.naive_bayes.MultinomialNB and sklearn.naive_bayes.BernoulliNB by adding the partial_fit method by Olivier Grisel.

- New website design and navigation by Gilles Louppe, Nelle Varoquaux, Vincent Michel and Andreas Müller.

- Improved documentation on multi-class, multi-label and multi-output classification by Yannick Schwartz and Arnaud Joly.

- Better input and error handling in the metrics module by Arnaud Joly and Joel Nothman.

- Speed optimization of the hmm module by Mikhail Korobov.

- Significant speed improvements for sklearn.cluster.DBSCAN by cleverless.
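To make the metrics.log_loss entry above more concrete, here is a minimal sketch comparing the library result with a hand computation of the cross-entropy. The probabilities are invented for illustration, and the manual formula is the standard mean negative log-probability of the true class rather than a copy of the library's implementation.

```python
import math

from sklearn.metrics import log_loss

# Illustrative binary problem: each row holds [P(class 0), P(class 1)].
y_true = [0, 1, 1]
y_prob = [[0.9, 0.1], [0.2, 0.8], [0.4, 0.6]]

loss = log_loss(y_true, y_prob)

# Hand computation: average of -log(probability assigned to the true class).
manual = -(math.log(0.9) + math.log(0.8) + math.log(0.6)) / 3

print(loss, manual)
```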

API changes summary¶

- The

auc_scorewas renamedroc_auc_score.- Testing scikit-learn with

sklearn.test()is deprecated. Usenosetests sklearnfrom the command line.- Feature importances in

tree.DecisionTreeClassifier,tree.DecisionTreeRegressorand all derived ensemble estimators are now computed on the fly when accessing thefeature_importances_attribute. Settingcompute_importances=Trueis no longer required. By Gilles Louppe.linear_model.lasso_pathandlinear_model.enet_pathcan return its results in the same format as that oflinear_model.lars_path. This is done by setting thereturn_modelsparameter toFalse. By Jaques Grobler and Alexandre Gramfortgrid_search.IterGridwas renamed togrid_search.ParameterGrid.- Fixed bug in

KFoldcausing imperfect class balance in some cases. By Alexandre Gramfort and Tadej Janež.sklearn.neighbors.BallTreehas been refactored, and asklearn.neighbors.KDTreehas been added which shares the same interface. The Ball Tree now works with a wide variety of distance metrics. Both classes have many new methods, including single-tree and dual-tree queries, breadth-first and depth-first searching, and more advanced queries such as kernel density estimation and 2-point correlation functions. By Jake Vanderplas- Support for scipy.spatial.cKDTree within neighbors queries has been removed, and the functionality replaced with the new

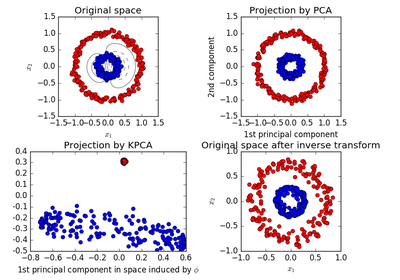

KDTreeclass.sklearn.neighbors.KernelDensityhas been added, which performs efficient kernel density estimation with a variety of kernels.sklearn.decomposition.KernelPCAnow always returns output withn_componentscomponents, unless the new parameterremove_zero_eigis set toTrue. This new behavior is consistent with the way kernel PCA was always documented; previously, the removal of components with zero eigenvalues was tacitly performed on all data.gcv_mode="auto"no longer tries to perform SVD on a densified sparse matrix insklearn.linear_model.RidgeCV.- Sparse matrix support in

sklearn.decomposition.RandomizedPCAis now deprecated in favor of the newTruncatedSVD.cross_validation.KFoldandcross_validation.StratifiedKFoldnow enforce n_folds >= 2 otherwise aValueErroris raised. By Olivier Grisel.datasets.load_files‘scharsetandcharset_errorsparameters were renamedencodinganddecode_errors.- Attribute

oob_score_insklearn.ensemble.GradientBoostingRegressorandsklearn.ensemble.GradientBoostingClassifieris deprecated and has been replaced byoob_improvement_.- Attributes in OrthogonalMatchingPursuit have been deprecated (copy_X, Gram, ...) and precompute_gram renamed precompute for consistency. See #2224.

- sklearn.preprocessing.StandardScaler now converts integer input to float, and raises a warning. Previously it rounded for dense integer input.

- sklearn.multiclass.OneVsRestClassifier now has a decision_function method. This will return the distance of each sample from the decision boundary for each class, as long as the underlying estimators implement the decision_function method. By Kyle Kastner.

- Better input validation, warning on unexpected shapes for y.
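The decision_function method of multiclass.OneVsRestClassifier described above can be sketched as follows. The iris dataset and the LinearSVC base estimator are arbitrary choices for illustration, and load_iris(return_X_y=True) assumes a scikit-learn release considerably newer than 0.14.

```python
from sklearn.datasets import load_iris
from sklearn.multiclass import OneVsRestClassifier
from sklearn.svm import LinearSVC

X, y = load_iris(return_X_y=True)

# LinearSVC implements decision_function, so the one-vs-rest wrapper can expose it.
clf = OneVsRestClassifier(LinearSVC(random_state=0)).fit(X, y)

# One column of decision values per class, one row per sample.
scores = clf.decision_function(X[:5])
print(scores.shape)
```

A base estimator without decision_function would not support this call, which is the condition stated in the entry above.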

People¶

List of contributors for release 0.14 by number of commits.

- 277 Gilles Louppe

- 245 Lars Buitinck

- 187 Andreas Mueller

- 124 Arnaud Joly

- 112 Jaques Grobler

- 109 Gael Varoquaux

- 107 Olivier Grisel

- 102 Noel Dawe

- 99 Kemal Eren

- 79 Joel Nothman

- 75 Jake VanderPlas

- 73 Nelle Varoquaux

- 71 Vlad Niculae

- 65 Peter Prettenhofer

- 64 Alexandre Gramfort

- 54 Mathieu Blondel

- 38 Nicolas Trésegnie

- 35 eustache

- 27 Denis Engemann

- 25 Yann N. Dauphin

- 19 Justin Vincent

- 17 Robert Layton

- 15 Doug Coleman

- 14 Michael Eickenberg

- 13 Robert Marchman

- 11 Fabian Pedregosa

- 11 Philippe Gervais

- 10 Jim Holmström

- 10 Tadej Janež

- 10 syhw

- 9 Mikhail Korobov

- 9 Steven De Gryze

- 8 sergeyf

- 7 Ben Root

- 7 Hrishikesh Huilgolkar

- 6 Kyle Kastner

- 6 Martin Luessi

- 6 Rob Speer

- 5 Federico Vaggi

- 5 Raul Garreta

- 5 Rob Zinkov

- 4 Ken Geis

- 3 A. Flaxman

- 3 Denton Cockburn

- 3 Dougal Sutherland

- 3 Ian Ozsvald

- 3 Johannes Schönberger

- 3 Robert McGibbon

- 3 Roman Sinayev

- 3 Szabo Roland

- 2 Diego Molla

- 2 Imran Haque

- 2 Jochen Wersdörfer

- 2 Sergey Karayev

- 2 Yannick Schwartz

- 2 jamestwebber

- 1 Abhijeet Kolhe

- 1 Alexander Fabisch

- 1 Bastiaan van den Berg

- 1 Benjamin Peterson

- 1 Daniel Velkov

- 1 Fazlul Shahriar

- 1 Felix Brockherde

- 1 Félix-Antoine Fortin

- 1 Harikrishnan S

- 1 Jack Hale

- 1 JakeMick

- 1 James McDermott

- 1 John Benediktsson

- 1 John Zwinck

- 1 Joshua Vredevoogd

- 1 Justin Pati

- 1 Kevin Hughes

- 1 Kyle Kelley

- 1 Matthias Ekman

- 1 Miroslav Shubernetskiy

- 1 Naoki Orii

- 1 Norbert Crombach

- 1 Rafael Cunha de Almeida

- 1 Rolando Espinoza La fuente

- 1 Seamus Abshere

- 1 Sergey Feldman

- 1 Sergio Medina

- 1 Stefano Lattarini

- 1 Steve Koch

- 1 Sturla Molden

- 1 Thomas Jarosch

- 1 Yaroslav Halchenko

Version 0.13.1¶

The 0.13.1 release only fixes some bugs and does not add any new functionality.

Changelog¶

- Fixed a testing error caused by the function cross_validation.train_test_split being interpreted as a test, by Yaroslav Halchenko.

- Fixed a bug in the reassignment of small clusters in cluster.MiniBatchKMeans, by Gael Varoquaux.

- Fixed default value of gamma in decomposition.KernelPCA, by Lars Buitinck.

- Updated joblib to 0.7.0d, by Gael Varoquaux.

- Fixed scaling of the deviance in ensemble.GradientBoostingClassifier, by Peter Prettenhofer.

- Better tie-breaking in multiclass.OneVsOneClassifier, by Andreas Müller.

- Other small improvements to tests and documentation.

People¶

List of contributors for release 0.13.1 by number of commits.

- 16 Lars Buitinck

- 12 Andreas Müller

- 8 Gael Varoquaux

- 5 Robert Marchman

- 3 Peter Prettenhofer

- 2 Hrishikesh Huilgolkar

- 1 Bastiaan van den Berg

- 1 Diego Molla

- 1 Gilles Louppe

- 1 Mathieu Blondel

- 1 Nelle Varoquaux

- 1 Rafael Cunha de Almeida

- 1 Rolando Espinoza La fuente

- 1 Vlad Niculae

- 1 Yaroslav Halchenko

Version 0.13¶

New Estimator Classes¶

- dummy.DummyClassifier and dummy.DummyRegressor, two data-independent predictors by Mathieu Blondel. Useful to sanity-check your estimators. See Dummy estimators in the user guide. Multioutput support added by Arnaud Joly.

- decomposition.FactorAnalysis, a transformer implementing the classical factor analysis, by Christian Osendorfer and Alexandre Gramfort. See Factor Analysis in the user guide.

- feature_extraction.FeatureHasher, a transformer implementing the “hashing trick” for fast, low-memory feature extraction from string fields by Lars Buitinck and feature_extraction.text.HashingVectorizer for text documents by Olivier Grisel. See Feature hashing and Vectorizing a large text corpus with the hashing trick for the documentation and sample usage.

- pipeline.FeatureUnion, a transformer that concatenates results of several other transformers by Andreas Müller. See FeatureUnion: composite feature spaces in the user guide.

- random_projection.GaussianRandomProjection, random_projection.SparseRandomProjection and the function random_projection.johnson_lindenstrauss_min_dim. The first two are transformers implementing Gaussian and sparse random projection matrix by Olivier Grisel and Arnaud Joly. See Random Projection in the user guide.

- kernel_approximation.Nystroem, a transformer for approximating arbitrary kernels by Andreas Müller. See Nystroem Method for Kernel Approximation in the user guide.

- preprocessing.OneHotEncoder, a transformer that computes binary encodings of categorical features by Andreas Müller. See Encoding categorical features in the user guide.

- linear_model.PassiveAggressiveClassifier and linear_model.PassiveAggressiveRegressor, predictors implementing an efficient stochastic optimization for linear models by Rob Zinkov and Mathieu Blondel. See Passive Aggressive Algorithms in the user guide.

- ensemble.RandomTreesEmbedding, a transformer for creating high-dimensional sparse representations using ensembles of totally random trees by Andreas Müller. See Totally Random Trees Embedding in the user guide.

- manifold.SpectralEmbedding and function manifold.spectral_embedding, implementing the “laplacian eigenmaps” transformation for non-linear dimensionality reduction by Wei Li. See Spectral Embedding in the user guide.

- isotonic.IsotonicRegression by Fabian Pedregosa, Alexandre Gramfort and Nelle Varoquaux,
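The “hashing trick” mentioned for feature_extraction.FeatureHasher can be illustrated with a short pure-Python sketch. This is not the library's code: the function name hash_features, the n_features default and the use of hashlib.md5 are all assumptions chosen so that the example runs with the standard library only.

```python
import hashlib


def hash_features(tokens, n_features=16):
    """Map a list of string tokens to a fixed-length signed count vector."""
    vector = [0.0] * n_features
    for token in tokens:
        digest = hashlib.md5(token.encode("utf-8")).digest()
        # The first four bytes pick the column; no vocabulary is stored.
        index = int.from_bytes(digest[:4], "little") % n_features
        # One further bit picks a sign so that collisions tend to cancel out.
        sign = 1.0 if digest[4] & 1 == 0 else -1.0
        vector[index] += sign
    return vector


row = hash_features(["cat", "dog", "cat"])
```

Because the column index is computed directly from the token, memory use depends only on n_features and not on the number of distinct tokens seen, at the cost of occasional collisions between unrelated tokens.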

Changelog¶

- metrics.zero_one_loss (formerly metrics.zero_one) now has an option for normalized output that reports the fraction of misclassifications, rather than the raw number of misclassifications. By Kyle Beauchamp.

- tree.DecisionTreeClassifier and all derived ensemble models now support sample weighting, by Noel Dawe and Gilles Louppe.

- Speedup improvement when using bootstrap samples in forests of randomized trees, by Peter Prettenhofer and Gilles Louppe.

- Partial dependence plots for Gradient Tree Boosting in ensemble.partial_dependence.partial_dependence by Peter Prettenhofer. See Partial Dependence Plots for an example.

- The table of contents on the website has now been made expandable by Jaques Grobler.

- feature_selection.SelectPercentile now breaks ties deterministically instead of returning all equally ranked features.

- feature_selection.SelectKBest and feature_selection.SelectPercentile are more numerically stable since they use scores, rather than p-values, to rank results. This means that they might sometimes select different features than they did previously.

- Ridge regression and ridge classification fitting with sparse_cg solver no longer has quadratic memory complexity, by Lars Buitinck and Fabian Pedregosa.

- Ridge regression and ridge classification now support a new fast solver called lsqr, by Mathieu Blondel.

- Speed up of metrics.precision_recall_curve by Conrad Lee.

- Added support for reading/writing svmlight files with pairwise preference attribute (qid in svmlight file format) in datasets.dump_svmlight_file and datasets.load_svmlight_file by Fabian Pedregosa.

- Faster and more robust metrics.confusion_matrix and Clustering performance evaluation by Wei Li.

- cross_validation.cross_val_score now works with precomputed kernels and affinity matrices, by Andreas Müller.

- LARS algorithm made more numerically stable with heuristics to drop regressors too correlated as well as to stop the path when numerical noise becomes predominant, by Gael Varoquaux.

- Faster implementation of metrics.precision_recall_curve by Conrad Lee.

- New kernel metrics.chi2_kernel by Andreas Müller, often used in computer vision applications.

- Fixed a longstanding bug in naive_bayes.BernoulliNB, by Shaun Jackman.

- Implemented predict_proba in multiclass.OneVsRestClassifier, by Andrew Winterman.

- Improve consistency in gradient boosting: estimators ensemble.GradientBoostingRegressor and ensemble.GradientBoostingClassifier use the estimator tree.DecisionTreeRegressor instead of the tree._tree.Tree data structure by Arnaud Joly.

- Fixed a floating point exception in the decision trees module, by Seberg.

- Fixed metrics.roc_curve failing when y_true has only one class, by Wei Li.

- Add the metrics.mean_absolute_error function which computes the mean absolute error. The metrics.mean_squared_error, metrics.mean_absolute_error and metrics.r2_score metrics support multioutput by Arnaud Joly.

- Fixed class_weight support in svm.LinearSVC and linear_model.LogisticRegression by Andreas Müller. The meaning of class_weight was reversed as erroneously higher weight meant fewer positives of a given class in earlier releases.

- Improve narrative documentation and consistency in sklearn.metrics for regression and classification metrics by Arnaud Joly.

- Fixed a bug in sklearn.svm.SVC when using csr-matrices with unsorted indices by Xinfan Meng and Andreas Müller.

- MiniBatchKMeans: Add random reassignment of cluster centers with few observations attached to them, by Gael Varoquaux.
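The difference between the normalized and raw output of metrics.zero_one_loss described in this changelog can be sketched in a few lines of plain Python. The function below is a hypothetical stand-in named zero_one_loss_sketch, written only to show the two modes; it is not the library's implementation and does no input validation beyond a length check.

```python
def zero_one_loss_sketch(y_true, y_pred, normalize=True):
    """Return the fraction (or raw count) of misclassified samples."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    # Count positions where the prediction differs from the true label.
    errors = sum(1 for t, p in zip(y_true, y_pred) if t != p)
    # normalize=True reports a fraction, normalize=False the raw count.
    return errors / len(y_true) if normalize else errors


y_true = [0, 1, 1, 0]
y_pred = [0, 1, 0, 1]
fraction = zero_one_loss_sketch(y_true, y_pred)               # 2 errors out of 4
count = zero_one_loss_sketch(y_true, y_pred, normalize=False)  # raw count
```

The fraction is comparable across datasets of different sizes, whereas the raw count is not, which is presumably why code relying on the old count-based behaviour needs to pass normalize=False explicitly.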

API changes summary¶

- Renamed all occurrences of n_atoms to n_components for consistency. This applies to decomposition.DictionaryLearning, decomposition.MiniBatchDictionaryLearning, decomposition.dict_learning, decomposition.dict_learning_online.

- Renamed all occurrences of max_iters to max_iter for consistency. This applies to semi_supervised.LabelPropagation and semi_supervised.label_propagation.LabelSpreading.

- Renamed all occurrences of learn_rate to learning_rate for consistency in ensemble.BaseGradientBoosting and ensemble.GradientBoostingRegressor.

- The module sklearn.linear_model.sparse is gone. Sparse matrix support was already integrated into the “regular” linear models.

- sklearn.metrics.mean_square_error, which incorrectly returned the accumulated error, was removed. Use mean_squared_error instead.

- Passing class_weight parameters to fit methods is no longer supported. Pass them to estimator constructors instead.

- GMMs no longer have decode and rvs methods. Use the score, predict or sample methods instead.

- The solver fit option in Ridge regression and classification is now deprecated and will be removed in v0.14. Use the constructor option instead.

- feature_extraction.text.DictVectorizer now returns sparse matrices in the CSR format, instead of COO.

- Renamed k in cross_validation.KFold and cross_validation.StratifiedKFold to n_folds, renamed n_bootstraps to n_iter in cross_validation.Bootstrap.

- Renamed all occurrences of n_iterations to n_iter for consistency. This applies to cross_validation.ShuffleSplit, cross_validation.StratifiedShuffleSplit, utils.randomized_range_finder and utils.randomized_svd.

- Replaced rho in linear_model.ElasticNet and linear_model.SGDClassifier by l1_ratio. The rho parameter had different meanings; l1_ratio was introduced to avoid confusion. It has the same meaning as previously rho in linear_model.ElasticNet and (1-rho) in linear_model.SGDClassifier.

- linear_model.LassoLars and linear_model.Lars now store a list of paths in the case of multiple targets, rather than an array of paths.

- The attribute gmm of hmm.GMMHMM was renamed to gmm_ to adhere more strictly with the API.

- cluster.spectral_embedding was moved to manifold.spectral_embedding.

- Renamed eig_tol in manifold.spectral_embedding, cluster.SpectralClustering to eigen_tol, renamed mode to eigen_solver.

- Renamed mode in manifold.spectral_embedding and cluster.SpectralClustering to eigen_solver.

- classes_ and n_classes_ attributes of tree.DecisionTreeClassifier and all derived ensemble models are now flat in case of single output problems and nested in case of multi-output problems.

- The estimators_ attribute of ensemble.gradient_boosting.GradientBoostingRegressor and ensemble.gradient_boosting.GradientBoostingClassifier is now an array of tree.DecisionTreeRegressor.

- Renamed chunk_size to batch_size in decomposition.MiniBatchDictionaryLearning and decomposition.MiniBatchSparsePCA for consistency.

- svm.SVC and svm.NuSVC now provide a classes_ attribute and support arbitrary dtypes for labels y. Also, the dtype returned by predict now reflects the dtype of y during fit (used to be np.float).

- Changed default test_size in cross_validation.train_test_split to None, added possibility to infer test_size from train_size in cross_validation.ShuffleSplit and cross_validation.StratifiedShuffleSplit.

- Renamed function sklearn.metrics.zero_one to sklearn.metrics.zero_one_loss. Be aware that the default behavior in sklearn.metrics.zero_one_loss is different from sklearn.metrics.zero_one: normalize=False is changed to normalize=True.

- Renamed function metrics.zero_one_score to metrics.accuracy_score.

- datasets.make_circles now has the same number of inner and outer points.

- In the Naive Bayes classifiers, the class_prior parameter was moved from fit to __init__.
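The rho to l1_ratio rename in this list is the one most likely to need a numeric conversion rather than a simple rename. The sketch below assumes the commonly written elastic net penalty, alpha * (l1_ratio * ||w||_1 + 0.5 * (1 - l1_ratio) * ||w||_2^2); both helper names are hypothetical and the code is only an illustration of the mixing parameter, not the library's implementation.

```python
def elastic_net_penalty(weights, alpha=1.0, l1_ratio=0.5):
    """Combined L1/L2 penalty, with l1_ratio as the weight of the L1 term."""
    l1 = sum(abs(w) for w in weights)
    l2_squared = sum(w * w for w in weights)
    return alpha * (l1_ratio * l1 + 0.5 * (1.0 - l1_ratio) * l2_squared)


def l1_ratio_from_old_sgd_rho(rho):
    """Convert an old SGDClassifier rho, following the (1-rho) rule above."""
    return 1.0 - rho


weights = [1.0, -2.0]
pure_l1 = elastic_net_penalty(weights, alpha=1.0, l1_ratio=1.0)  # L1 term only
pure_l2 = elastic_net_penalty(weights, alpha=1.0, l1_ratio=0.0)  # L2 term only
converted = l1_ratio_from_old_sgd_rho(0.85)
```

Under this reading, an old ElasticNet rho carries over unchanged as l1_ratio, while an old SGDClassifier rho has to be replaced by one minus its value.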

People¶

List of contributors for release 0.13 by number of commits.

- 364 Andreas Müller

- 143 Arnaud Joly

- 137 Peter Prettenhofer

- 131 Gael Varoquaux

- 117 Mathieu Blondel

- 108 Lars Buitinck

- 106 Wei Li

- 101 Olivier Grisel

- 65 Vlad Niculae

- 54 Gilles Louppe

- 40 Jaques Grobler

- 38 Alexandre Gramfort

- 30 Rob Zinkov

- 19 Aymeric Masurelle

- 18 Andrew Winterman

- 17 Fabian Pedregosa

- 17 Nelle Varoquaux

- 16 Christian Osendorfer

- 14 Daniel Nouri

- 13 Virgile Fritsch

- 13 syhw

- 12 Satrajit Ghosh

- 10 Corey Lynch

- 10 Kyle Beauchamp

- 9 Brian Cheung

- 9 Immanuel Bayer

- 9 mr.Shu

- 8 Conrad Lee

- 8 James Bergstra

- 7 Tadej Janež

- 6 Brian Cajes

- 6 Jake Vanderplas

- 6 Michael

- 6 Noel Dawe

- 6 Tiago Nunes

- 6 cow

- 5 Anze

- 5 Shiqiao Du

- 4 Christian Jauvin

- 4 Jacques Kvam

- 4 Richard T. Guy

- 4 Robert Layton

- 3 Alexandre Abraham

- 3 Doug Coleman

- 3 Scott Dickerson